Aug 29, 2025 | The Hacker News | Enterprise Security / Artificial Intelligence

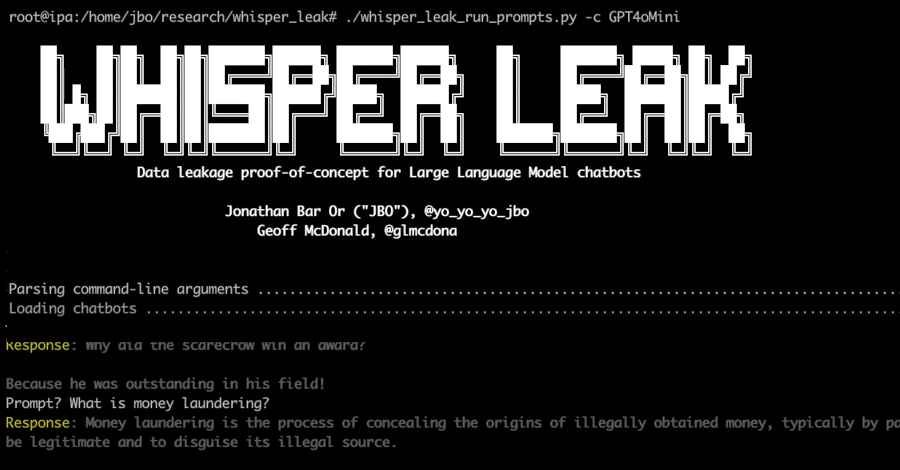

Generative AI platforms like ChatGPT, Gemini, Copilot, and Claude are increasingly common in organizations. While these tools improve efficiency across tasks, they also create new data loss prevention challenges. Sensitive information may be shared through chat prompts, files uploaded for AI-driven summarization, or browser plugins that bypass familiar security controls. Standard DLP products often fail to register these events.

Solutions such as Fidelis Network® Detection and Response (NDR) introduce network-based data loss prevention that brings AI activity under control. This allows teams to monitor, enforce policies, and audit GenAI use as part of a broader data loss prevention strategy.

Why Data Loss Prevention Must Evolve for GenAI

Data loss prevention for generative AI requires shifting focus from endpoints and siloed channels to visibility across the entire traffic path. Unlike earlier tools that rely on scanning emails or storage shares, NDR technologies like Fidelis identify threats as they traverse the network, analyzing traffic patterns even when the content is encrypted.

The critical concern is not just who created the data, but when and how it leaves the organization's control, whether through direct uploads, conversational queries, or integrated AI features in business systems.

Monitoring Generative AI Usage Effectively

Organizations can apply network-based GenAI DLP through three complementary approaches:

URL-Based Indicators and Real-Time Alerts

Administrators can define indicators for specific GenAI platforms, for example, ChatGPT. These rules can be applied to multiple services and tailored to relevant departments or user groups. Monitoring can run across web, email, and other sensors.

Process:

When a user accesses a GenAI endpoint, Fidelis NDR generates an alert

If a DLP policy is triggered, the platform records a full packet capture for subsequent analysis

Web and mail sensors can automate actions, such as redirecting user traffic or isolating suspicious messages

Advantages:

Real-time notifications enable prompt security response

Supports comprehensive forensic analysis as needed

Integrates with incident response playbooks and SIEM or SOC tools

Considerations:

Maintaining up-to-date rules is necessary as AI endpoints and plugins change

High GenAI usage may require alert tuning to avoid overload
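As a rough illustration of the URL-indicator idea described above, the following Python sketch matches a requested URL against a small indicator list and raises an alert only for monitored user groups. It is not Fidelis NDR's API or rule format: the hostnames, the `Alert` type, and the `match_indicator` and `evaluate` functions are all hypothetical names chosen for the example, and a real indicator list would need regular upkeep as endpoints change.

```python
from dataclasses import dataclass
from typing import Optional, Set
from urllib.parse import urlsplit

# Hypothetical indicator list (hostname -> service label); illustrative only.
GENAI_INDICATORS = {
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


@dataclass
class Alert:
    user_group: str
    service: str
    url: str


def match_indicator(url: str) -> Optional[str]:
    """Return the service label if the URL's host matches an indicator."""
    host = (urlsplit(url).hostname or "").lower()
    for domain, service in GENAI_INDICATORS.items():
        # Match the domain itself or any subdomain, but not look-alike hosts.
        if host == domain or host.endswith("." + domain):
            return service
    return None


def evaluate(url: str, user_group: str, monitored_groups: Set[str]) -> Optional[Alert]:
    """Emit an alert only when the host matches and the group is monitored."""
    service = match_indicator(url)
    if service is None or user_group not in monitored_groups:
        return None
    return Alert(user_group=user_group, service=service, url=url)
```

Scoping alerts to `monitored_groups` is one simple way to express the per-department tailoring mentioned earlier, and it also reduces alert volume in high-usage environments.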

Metadata-Only Monitoring for Audit and Low-Noise Environments

Not every organization needs immediate alerts for all GenAI activity. Network-based data loss prevention policies often record activity as metadata, creating a searchable audit trail with minimal disruption.

Alerts are suppressed, and all relevant session metadata is retained

Sessions log source and destination IP, protocol, ports, device, and timestamps

Security teams can review all GenAI interactions historically by host, group, or time frame

Advantages:

Reduces false positives and operational fatigue for SOC teams

Enables long-term trend analysis and audit or compliance reporting

Limits:

Important events may go unnoticed if not regularly reviewed

Session-level forensics and full packet capture are only available if a specific alert escalates

In practice, many organizations use this approach as a baseline, adding active monitoring only for higher-risk departments or activities.
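To make the metadata-only approach more concrete, here is a minimal Python sketch of a session record carrying the fields listed above, plus a historical query by device and time window. The `SessionRecord` type and `query_sessions` function are hypothetical and do not reflect how any particular NDR product stores or searches its metadata.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class SessionRecord:
    # Fields mirror the metadata listed in the article; names are assumptions.
    src_ip: str
    dst_ip: str
    protocol: str
    src_port: int
    dst_port: int
    device: str
    timestamp: datetime


def query_sessions(
    records: Iterable[SessionRecord],
    device: Optional[str] = None,
    start: Optional[datetime] = None,
    end: Optional[datetime] = None,
) -> List[SessionRecord]:
    """Filter sessions by device and half-open window [start, end), oldest first."""
    matches = [
        r for r in records
        if (device is None or r.device == device)
        and (start is None or r.timestamp >= start)
        and (end is None or r.timestamp < end)
    ]
    return sorted(matches, key=lambda r: r.timestamp)
```

No alert is produced at any point in this sketch; the value comes entirely from someone running such queries on a regular schedule, which is the limitation noted above.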

Detecting and Preventing Risky File Uploads

Uploading files to GenAI platforms introduces a higher risk, especially when handling PII, PHI, or proprietary data. Fidelis NDR can monitor such uploads as they happen. Effective AI security and data protection means closely inspecting these actions.

Process:

The system recognizes when files are being uploaded to GenAI endpoints

DLP policies automatically inspect file contents for sensitive information

When a rule matches, the full context of the session is captured, even without user login, and device attribution provides accountability

Advantages:

Detects and interrupts unauthorized data egress events

Enables post-incident review with full transactional context

Considerations:

Monitoring works only for uploads visible on managed network paths

Attribution is at the asset or device level unless user authentication is present
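The content-inspection step can be pictured with a small Python sketch that scans an uploaded payload against pattern-based rules. The rule names and regular expressions below are deliberately simplistic assumptions for illustration (a US Social Security number format and an email address); production DLP policies are considerably richer, and nothing here represents the vendor's actual rule engine.

```python
import re
from typing import Dict

# Hypothetical content rules; real policies would be defined with
# compliance and privacy stakeholders and cover far more data types.
CONTENT_RULES = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def inspect_upload(payload: bytes) -> Dict[str, int]:
    """Return a count of matches per rule; an empty dict means no rule fired."""
    # Decode leniently so malformed bytes do not abort the inspection.
    text = payload.decode("utf-8", errors="replace")
    hits: Dict[str, int] = {}
    for name, pattern in CONTENT_RULES.items():
        count = len(pattern.findall(text))
        if count:
            hits[name] = count
    return hits
```

A non-empty result is the point at which, in the process described above, session context would be captured and tied to the originating device.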

Weighing Your Options: What Works Best

Real-Time URL Alerts

Pros: Enables rapid intervention and forensic investigation, supports incident triage and automated response

Cons: May increase noise and workload in high-use environments, needs routine rule maintenance as endpoints evolve

Metadata-Only Mode

Pros: Low operational overhead, strong for audits and post-event review, keeps security attention focused on true anomalies

Cons: Not suited to immediate threats, investigation happens only after the fact

File Upload Monitoring

Pros: Targets actual data exfiltration events, provides detailed records for compliance and forensics

Cons: Asset-level mapping only when login is absent, blind to off-network or unmonitored channels

Building Comprehensive AI Data Protection

A comprehensive GenAI DLP program involves:

Maintaining live lists of GenAI endpoints and updating monitoring rules regularly

Assigning a monitoring mode (alerting, metadata, or both) by risk and business need

Collaborating with compliance and privacy leaders when defining content rules

Integrating network detection outputs with SOC automation and asset management systems

Educating users on policy compliance and visibility of GenAI usage

Organizations should periodically review policy logs and update their system to address new GenAI services, plugins, and emerging AI-driven business uses.
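One way to think about assigning a monitoring mode by risk is as a small lookup from risk tier to mode. The sketch below is purely illustrative: the tier names, the `Mode` flag, and the `mode_for` function are assumptions made for the example, and defaulting unknown tiers to metadata-only simply reflects the baseline practice described earlier.

```python
from enum import Flag, auto


class Mode(Flag):
    METADATA = auto()
    ALERT = auto()
    BOTH = METADATA | ALERT


# Hypothetical risk tiers; an organization would define its own.
MODE_BY_RISK = {
    "low": Mode.METADATA,
    "medium": Mode.ALERT,
    "high": Mode.BOTH,
}


def mode_for(risk_tier: str) -> Mode:
    """Look up the mode for a tier, falling back to metadata-only as a baseline."""
    return MODE_BY_RISK.get(risk_tier.lower(), Mode.METADATA)
```

Keeping this mapping in one place makes it easier to revisit as new GenAI services and business uses appear.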

Best Practices for Implementation

Successful deployment requires:

Clear platform inventory management and regular policy updates

Risk-based monitoring approaches tailored to organizational needs

Integration with existing SOC workflows and compliance frameworks

User education programs that promote responsible AI usage

Continuous monitoring and adaptation to evolving AI technologies

Key Takeaways

Modern network-based data loss prevention solutions, as illustrated by Fidelis NDR, help enterprises balance the adoption of generative AI with strong AI security and data protection. By combining alert-based, metadata, and file-upload controls, organizations build a flexible monitoring environment where productivity and compliance coexist. Security teams retain the context and reach needed to handle new AI risks, while users continue to benefit from the value of GenAI technology.

This article is a contributed piece from one of our valued partners.