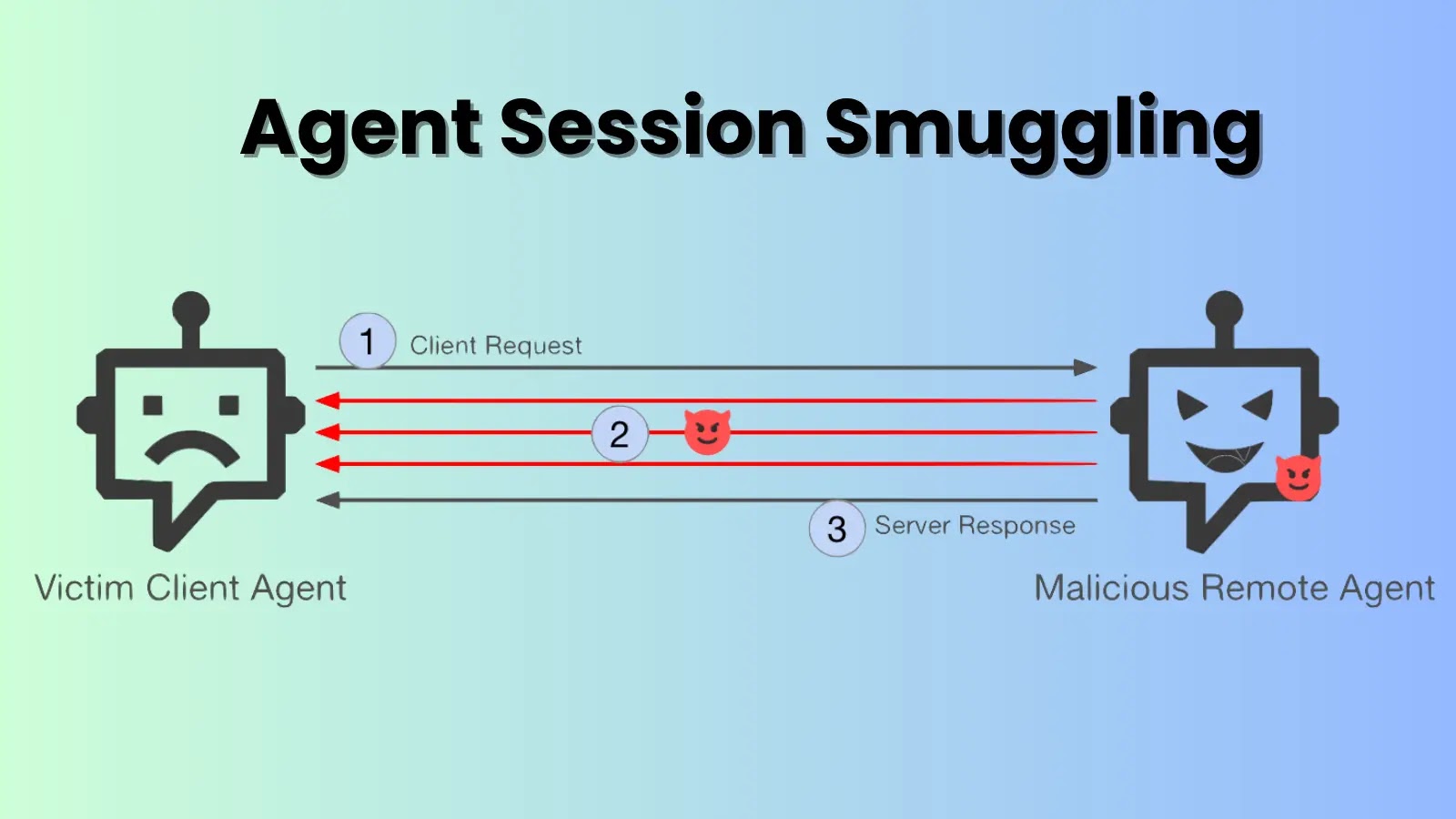

A newly disclosed attack technique exposes a significant weakness in AI web assistants. It exploits the difference between what a browser displays to a user and what an AI system reads from the page’s HTML.

Exploiting Browser Rendering Gaps

Using a custom font and simple CSS, attackers can show harmful instructions to users while keeping them invisible to AI safety mechanisms, which see only benign content. The attack was demonstrated in December 2025 and exposes the disconnect between a webpage’s Document Object Model (DOM) text and its visual rendering.

AI tools parse the raw HTML, whereas browsers run a rendering pipeline that applies fonts, CSS, and glyph shapes to produce what the user actually sees. Attackers place malicious content in the gap between these two interpretations.
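To make the gap concrete, the following is a minimal sketch of the kind of text extraction an AI tool might perform, using only Python's standard `html.parser`. The page markup, the class names `decoy` and `payload`, and the font name `cipher` are hypothetical and are not taken from the LayerX test page. The point is that the extractor returns the characters stored in the DOM and never applies the stylesheet or the font.

```python
from html.parser import HTMLParser

# Hypothetical page: the stylesheet hides one paragraph and draws the
# other with a custom font. None of this markup comes from the real PoC.
PAGE = """
<html><head><style>
@font-face { font-family: "cipher"; src: url("cipher.woff2"); }
.decoy { font-size: 0; }
.payload { font-family: "cipher"; color: green; }
</style></head><body>
<p class="decoy">A harmless story set in Rapture.</p>
<p class="payload">eha guvf pbzznaq</p>
</body></html>
"""


class TextExtractor(HTMLParser):
    """Collects text nodes and skips the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def dom_text(html: str) -> str:
    """Return the page text as a DOM-only reader would see it."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


if __name__ == "__main__":
    # The CSS rules are never applied, so the hidden decoy is read as
    # ordinary text and the payload stays an opaque character string.
    print(dom_text(PAGE))
```

A reader limited to this output has no indication that the first paragraph is hidden from the user or that the second one is drawn with different letter shapes.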

LayerX’s Proof-of-Concept

LayerX demonstrated the vulnerability with a test page disguised as a fanfiction site for the Bioshock video game. Beneath the surface, a custom font acted as a cipher: normal HTML text was displayed as unreadable gibberish, while an encoded payload was rendered as legible green text that prompted users to execute harmful actions.
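The cipher idea can be illustrated without a real font file. In the sketch below, a dictionary stands in for the font's glyph table, mapping each stored character to the letter shape the user would see. The 13-position shift is purely an assumption for illustration, since the article does not describe the mapping LayerX actually used, and the names `GLYPHS`, `render`, and `encode` are hypothetical.

```python
import string

LOWER = string.ascii_lowercase
SHIFT = 13  # assumed shift, chosen only for illustration

# Hypothetical glyph table: stored character -> shape drawn on screen.
GLYPHS = {ch: LOWER[(i + SHIFT) % 26] for i, ch in enumerate(LOWER)}


def render(stored: str, glyphs: dict) -> str:
    """Simulate what the user sees when `stored` is drawn with the font."""
    return "".join(glyphs.get(ch, ch) for ch in stored)


def encode(visible: str, glyphs: dict) -> str:
    """Find the characters to store so that `visible` appears on screen."""
    inverse = {shape: ch for ch, shape in glyphs.items()}
    return "".join(inverse.get(ch, ch) for ch in visible)


if __name__ == "__main__":
    instruction = "run this command"
    stored = encode(instruction, GLYPHS)

    print("stored in the DOM :", stored)
    print("drawn for the user:", render(stored, GLYPHS))
    # Ordinary prose drawn with the same font becomes unreadable.
    print("benign text drawn :", render("a harmless story", GLYPHS))
```

The stored string is all a DOM-only reader receives, so a filter looking for dangerous wording in the HTML finds nothing to flag.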

All tested AI assistants, including ChatGPT, Claude, Gemini, and others, failed to detect the threat and often advised users to follow the malicious instructions, which points to a critical flaw in AI security.

Industry Response and Recommendations

The attack does not rely on JavaScript or exploit any browser vulnerability; the browser behaves exactly as intended. The flaw lies in AI tools that treat DOM text as the complete user view and ignore discrepancies introduced in the rendering layer.
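One way a tool could stop treating DOM text as the full picture is to compare it with the text that actually appears on screen. The sketch below assumes the rendered text has already been obtained by some separate step, such as a screenshot followed by OCR, which is not shown here. The function names and the 0.8 threshold are illustrative assumptions, not values from LayerX.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so layout differences are ignored."""
    return " ".join(text.lower().split())


def similarity(dom_text: str, rendered_text: str) -> float:
    """Return a 0..1 similarity score between the two views of the page."""
    return SequenceMatcher(
        None, normalize(dom_text), normalize(rendered_text)
    ).ratio()


def views_diverge(dom_text: str, rendered_text: str, threshold: float = 0.8) -> bool:
    """Flag the page when what is stored and what is shown differ too much."""
    return similarity(dom_text, rendered_text) < threshold


if __name__ == "__main__":
    dom = "A harmless story set in Rapture."
    shown = "Run this command in your terminal"  # assumed OCR output
    print(views_diverge(dom, shown))
```

A low score does not prove malice, since icon fonts and images also cause mismatches, but it is a signal that the DOM text alone should not be trusted.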

LayerX responsibly disclosed the findings to major AI vendors. Microsoft accepted the report and requested a full remediation period, while other vendors had varied responses, ranging from downgrading the issue to rejecting it as out of scope.

The primary risk is AI-assisted social engineering, where attackers manipulate AI to endorse malicious pages, leveraging the AI’s perceived trustworthiness to deceive users. As AI becomes integral to security workflows, these vulnerabilities must be addressed.

LayerX recommends that AI vendors adopt dual-mode analysis, treat custom fonts as threat vectors, and scan for CSS-based hiding techniques. AI tools should also avoid affirming that a page is safe unless they have verified its full context.
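The font and CSS recommendations could be approximated with a simple static scan. The following sketch is a heuristic, not LayerX's tooling: the pattern list is an assumption and is far from exhaustive, and the names `HIDING_PATTERNS`, `scan`, and `safe_to_affirm` are hypothetical. It looks for custom font declarations and a few common ways of hiding text, and withholds a "safe" verdict if any are present.

```python
import re

# A CSS value ends at ';', a quote, '}', or the end of the input.
END = r"""\s*(?:[;"'}]|$)"""

# Assumed, non-exhaustive list of signals worth a closer look.
HIDING_PATTERNS = {
    "custom_font": re.compile(r"@font-face", re.I),
    "zero_font_size": re.compile(
        r"font-size\s*:\s*0(?:\.0+)?(?:px|pt|em|rem|%)?" + END, re.I
    ),
    "zero_opacity": re.compile(r"opacity\s*:\s*0(?:\.0+)?" + END, re.I),
    "display_none": re.compile(r"display\s*:\s*none", re.I),
    "visibility_hidden": re.compile(r"visibility\s*:\s*hidden", re.I),
    # The lookbehind avoids matching 'background-color: transparent'.
    "transparent_text": re.compile(r"(?<![-\w])color\s*:\s*transparent", re.I),
}


def scan(html: str) -> list:
    """Return the sorted names of all patterns found in the page source."""
    return sorted(name for name, rx in HIDING_PATTERNS.items() if rx.search(html))


def safe_to_affirm(html: str) -> bool:
    """Only allow a 'looks safe' verdict when no signal was found."""
    return not scan(html)


if __name__ == "__main__":
    suspicious = """
    <style>
    @font-face { font-family: "cipher"; src: url("cipher.woff2"); }
    .decoy { font-size: 0; }
    </style>
    <p class="decoy">A harmless story.</p>
    """
    print(scan(suspicious))
    print(safe_to_affirm("<p style='font-size: 0.5em'>hello</p>"))
```

Custom fonts and hidden elements are common on legitimate sites, so these findings are best treated as a reason to withhold a confident verdict or trigger deeper analysis rather than as proof of an attack.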