A newly reported npm-based attack poses a significant risk to software developers who use AI coding tools. On March 20, 2026, an account called gemini-check published a malicious npm package named gemini-ai-checker, presenting it as a utility for verifying Google Gemini AI tokens.

Deceptive Package Unveiled

Although the package appeared authentic, it carried malware designed to exfiltrate credentials, files, and tokens from AI development environments. Its README copied the content of an unrelated JavaScript library, chai-await-async, a mismatch that should have served as a red flag but that many developers overlooked.
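
The README mismatch described above is simple enough to check mechanically. The following is a minimal sketch, not a vetted detection tool: it assumes an unpacked package directory containing a package.json and a README file, and the function names are hypothetical. It only asks whether the README ever mentions the name the package declares.

```python
import json
from pathlib import Path


def readme_matches_name(package_name: str, readme_text: str) -> bool:
    """Return True if the README text mentions the declared package name."""
    # Drop an npm scope such as "@org/" before comparing.
    bare_name = package_name.split("/")[-1].lower()
    return bare_name in readme_text.lower()


def check_package_dir(package_dir: str) -> bool:
    """Apply the check to an unpacked npm package directory."""
    root = Path(package_dir)
    manifest = json.loads((root / "package.json").read_text(encoding="utf-8"))
    readmes = [
        p for p in root.iterdir()
        if p.is_file() and p.name.lower().startswith("readme")
    ]
    if not readmes:
        # A missing README is treated as a mismatch in this sketch.
        return False
    text = readmes[0].read_text(encoding="utf-8", errors="replace")
    return readme_matches_name(manifest["name"], text)
```

A package named gemini-ai-checker whose README only discusses chai-await-async would fail this check. A passing result proves little, since an attacker can trivially write a matching README, so the check is best treated as one cheap signal among several.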

Upon installation, the package quietly contacted a Vercel-hosted server at server-check-genimi.vercel.app and executed a JavaScript payload on the victim’s system. Cyber and Ramen analysts linked the payload to OtterCookie, a backdoor associated with the Contagious Interview campaign, which is believed to involve North Korean actors.

Widespread Impact and Persistence

The same threat actor was behind two other packages, express-flowlimit and chai-extensions-extras; all three used the same Vercel infrastructure. By the time of reporting, the packages had collectively been downloaded more than 500 times. Although gemini-ai-checker was removed before April 2026, the other two packages remained active.
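
The three package names reported here can be used as simple indicators when auditing a project. The sketch below assumes a parsed npm package-lock.json and looks for those names in both the flat "packages" map (lockfileVersion 2 and 3) and the nested "dependencies" tree (lockfileVersion 1). The function name and the KNOWN_BAD set are illustrative, and the list covers only the packages named in this report.

```python
import json
from pathlib import Path

# Package names taken from this report; extend as new indicators are published.
KNOWN_BAD = {"gemini-ai-checker", "express-flowlimit", "chai-extensions-extras"}


def find_flagged_packages(lock: dict) -> set[str]:
    """Return the flagged package names present in a parsed package-lock.json."""
    found = set()

    # lockfileVersion 2 and 3: flat map keyed by install path,
    # e.g. "node_modules/a/node_modules/b". The root package has key "".
    for install_path in lock.get("packages", {}):
        name = install_path.rpartition("node_modules/")[2]
        if name in KNOWN_BAD:
            found.add(name)

    # lockfileVersion 1 (also kept in version 2): nested dependency tree.
    stack = [lock.get("dependencies", {})]
    while stack:
        deps = stack.pop()
        for name, meta in deps.items():
            if name in KNOWN_BAD:
                found.add(name)
            if isinstance(meta, dict):
                stack.append(meta.get("dependencies", {}))

    return found


def scan_lockfile(path: str) -> set[str]:
    """Convenience wrapper that reads the lockfile from disk."""
    return find_flagged_packages(json.loads(Path(path).read_text(encoding="utf-8")))
```

Running scan_lockfile("package-lock.json") in a project root returns an empty set when none of the three names appear. Matching on exact names will not catch renamed or newly published packages from the same actor.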

What sets this malware apart is its focus on AI developer tools: it sought access to directories used by platforms such as Cursor, Claude, and others, putting API keys, conversation logs, and source code at risk.

Technical Intricacies of the Attack

The infection chain was carefully built to evade detection. The gemini-ai-checker package contained multiple files and dependencies, which made it unusually large for a token checker, yet it was structured to appear legitimate. Within the package, a file named libconfig.js stored the command-and-control (C2) configuration as fragmented variables, so basic scanning tools that search for complete strings would not flag it.

On installation, libcaller.js reassembled these fragments and issued HTTP GET requests to the Vercel endpoint. Because this approach avoided traditional disk writes, it bypassed many security measures.
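
To illustrate why fragmenting defeats a plain substring scan, here is a rough sketch of one possible counter-heuristic: extract every string literal from a JavaScript file, join them in source order, and search the joined text for a known indicator. The SAMPLE snippet is invented for illustration and is not the actual content of libconfig.js, which this report does not reproduce; the function names are likewise hypothetical.

```python
import re

# Matches single-, double-, or backtick-quoted literals on one line,
# allowing backslash escapes inside the literal.
STRING_LITERAL = re.compile(r"""(['"`])((?:\\.|(?!\1).)*)\1""")

# Hypothetical example of a hostname split across variables.
SAMPLE = (
    'const a = "server-check-";\n'
    'const b = "genimi";\n'
    'const c = ".vercel" + ".app";\n'
)


def joined_literals(js_source: str) -> str:
    """Concatenate the contents of all string literals in source order."""
    return "".join(m.group(2) for m in STRING_LITERAL.finditer(js_source))


def hides_indicator(js_source: str, indicator: str) -> bool:
    """True if the indicator is absent verbatim but appears once literals are joined."""
    return indicator not in js_source and indicator in joined_literals(js_source)
```

With the sample above, hides_indicator(SAMPLE, "server-check-genimi.vercel.app") is true: the hostname never appears verbatim, yet it emerges once the fragments are joined. The heuristic depends on fragments appearing in order and unencoded, so shuffled or encoded fragments would evade it, and a real scanner would need to parse and evaluate the code rather than rely on a regular expression.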

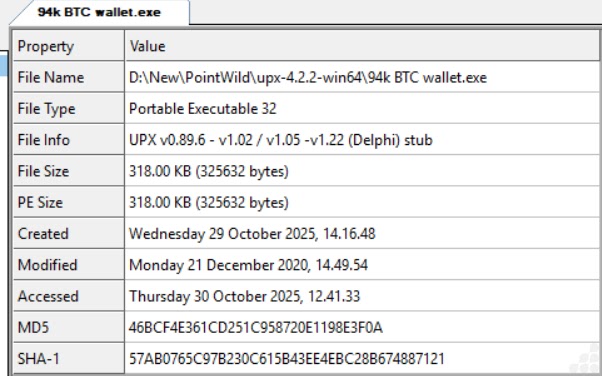

The decoded payload consisted of four modules, each running as a separate Node.js process and connecting to a C2 server. The modules targeted browser data, cryptocurrency wallets, and AI tool directories, among other critical areas.

Defensive Measures and Recommendations

Defenders are advised to block or monitor outbound connections to Vercel and to use Microsoft’s KQL queries to detect suspicious Node.js activity. Developers should inspect the contents of npm packages before installing them and be wary of mismatches between a package's name and its README.

Directories used by AI tools should be safeguarded with the same care as critical system folders. Reporting suspicious packages that impersonate well-known brands helps the community mitigate potential threats.
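
As one small, concrete step toward treating these directories like system folders, the sketch below checks whether a directory is readable or writable by anyone other than its owner. It assumes a POSIX system, and the paths in CANDIDATE_DIRS are assumptions for illustration: the report names the tools but not where they store data, so the list should be adjusted to the actual locations on a given machine.

```python
import stat
from pathlib import Path

# Assumed locations, not confirmed by the report; adjust for your tools.
CANDIDATE_DIRS = [Path.home() / ".cursor", Path.home() / ".claude"]


def is_owner_only(path: Path) -> bool:
    """True if group and other have no permission bits on the path (POSIX)."""
    mode = stat.S_IMODE(path.stat().st_mode)
    return mode & (stat.S_IRWXG | stat.S_IRWXO) == 0


def loosely_permissioned(dirs) -> list:
    """Return the existing directories that are accessible beyond their owner."""
    return [d for d in dirs if d.is_dir() and not is_owner_only(d)]


if __name__ == "__main__":
    for directory in loosely_permissioned(CANDIDATE_DIRS):
        print(f"Consider restricting permissions on {directory}")
```

Tight permissions only limit access from other accounts. Malware that runs under the developer's own account, as an installed npm package does, can still read these directories, so this check complements rather than replaces vetting packages before installation.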
