Security researchers have identified a critical vulnerability in Ollama that could allow remote attackers to read process memory without authorization. The flaw, an out-of-bounds read, is tracked as CVE-2026-7482 and has been named Bleeding Llama by Cyera. It affects more than 300,000 servers globally and poses a substantial risk to users of this widely used open-source framework for running large language models (LLMs) locally.

Understanding the Vulnerability

The vulnerability arises from a defect in Ollama’s GGUF model loader in versions prior to 0.17.1. The flaw is reachable through the /api/create endpoint, where an attacker can supply a GGUF file that declares a tensor offset and size exceeding the actual file length. As a result, the server reads beyond the allocated heap buffer during quantization, potentially leaking sensitive data.

GGUF, or GPT-Generated Unified Format, is a file format for storing large language models for local execution. The vulnerability stems from unsafe memory handling in the ‘WriteTo()’ function, which can bypass the memory-safety checks the programming language would otherwise enforce. To exploit the flaw, an attacker sends a malicious GGUF file with inflated tensor values, which triggers the out-of-bounds read when the model is created.
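The missing check can be illustrated with a short sketch. This is not Ollama's code or its actual patch. It only shows the general idea of rejecting a tensor whose declared byte range runs past the end of the file. The TensorInfo type, its fields, and the data_start parameter are hypothetical simplifications of a GGUF header.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TensorInfo:
    """Hypothetical, simplified view of one tensor entry in a GGUF header."""
    name: str
    offset: int  # byte offset of the tensor data, relative to data_start
    size: int    # byte length the header claims for the tensor data


def tensor_in_bounds(tensor: TensorInfo, data_start: int, file_length: int) -> bool:
    """Return True only if the tensor's claimed byte range lies inside the file."""
    if tensor.offset < 0 or tensor.size < 0 or data_start < 0:
        return False
    end = data_start + tensor.offset + tensor.size
    return end <= file_length


def validate_tensors(tensors, data_start, file_length):
    """Return the names of tensors whose claimed range runs past the end of the file."""
    return [t.name for t in tensors if not tensor_in_bounds(t, data_start, file_length)]
```

A loader built along these lines would refuse the file as soon as validate_tensors returns a non-empty list, before any tensor data is read. Python integers do not overflow; in a language with fixed-width integers, the addition itself would also need an overflow guard.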

Potential Impact and Exploitation

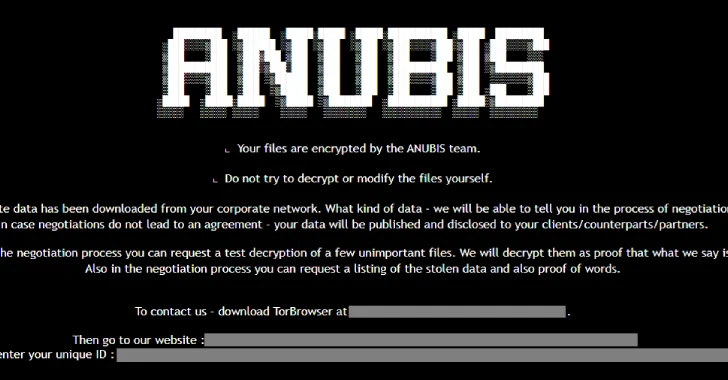

If exploited, the vulnerability could expose sensitive information such as environment variables, API keys, and user data. The attack involves three main steps: uploading a crafted GGUF file, triggering model creation so that the out-of-bounds read occurs, and using the /api/push endpoint to send the resulting data to an external server controlled by the attacker.

Cyera security researcher Dor Attias warns that attackers could gain access to a wide range of organizational data, including proprietary information and customer details. Where Ollama is integrated with tools like Claude Code, the potential exposure is even greater, as all tool outputs could be compromised.

Recommendations and Future Outlook

To mitigate the risk, users are advised to update to the latest version (0.17.1 or later), limit network exposure, and place instances behind a firewall. Because Ollama’s REST API lacks built-in authentication, deploying an authentication proxy or API gateway in front of it is also recommended. Administrators should also audit running instances for internet exposure.
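As a rough aid for such an audit, the sketch below checks two things for a single instance: whether a version string is at least 0.17.1, and whether a bind address is loopback-only. It assumes the reader has already obtained the version string and the bind host by their own means. All function names are illustrative, and a pre-release suffix such as "-rc1" is simply ignored, which may not be the right call for every deployment.

```python
import ipaddress

PATCHED_VERSION = (0, 17, 1)  # from the article: versions before 0.17.1 are affected


def parse_version(text: str) -> tuple:
    """Parse 'MAJOR.MINOR.PATCH' (optionally prefixed with 'v') into a tuple of ints."""
    core = text.strip().lstrip("v").split("-")[0]  # assumption: ignore any pre-release suffix
    return tuple(int(part) for part in core.split("."))


def is_patched(version_text: str) -> bool:
    """Return True if the version is 0.17.1 or later."""
    return parse_version(version_text) >= PATCHED_VERSION


def is_loopback_only(bind_host: str) -> bool:
    """Return True if the bind address is reachable only from the local machine."""
    if bind_host == "localhost":
        return True
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        return False  # unrecognized hostnames are treated as potentially exposed
```

An instance for which is_patched is false and is_loopback_only is false would be the first candidate for upgrading and for moving behind a firewall or authentication proxy.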

Separately, Striga has reported further vulnerabilities in Ollama’s Windows update mechanism that could lead to persistent code execution. The flaws, tracked as CVE-2026-42248 and CVE-2026-42249, remain unpatched and could be exploited to execute arbitrary code when a user logs in. Users should disable automatic updates and remove shortcuts from the Startup folder to prevent silent execution.

Overall, while Ollama offers significant advantages for local LLM execution, these flaws underscore the need for users to stay informed and to secure their deployments proactively.