The rapid advancement of artificial intelligence (AI) is reshaping industries, but it has also introduced significant security risks. As businesses rush to harness AI’s potential, the focus on speed is undermining essential security protocols. This trend is evident in the growing number of self-hosted large language model (LLM) deployments, where the pressure to innovate quickly is creating a hazardous environment for security.

The Vulnerability of AI Infrastructure

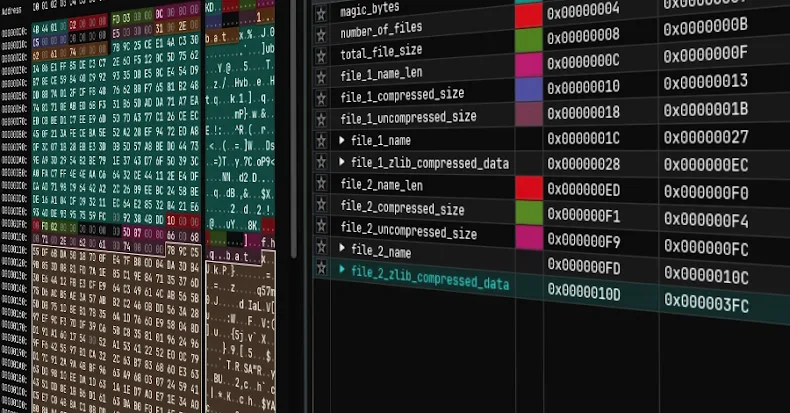

A recent investigation highlighted the deteriorating security posture of AI infrastructure. Using certificate transparency logs, researchers examined over two million hosts with a million exposed services and found a concerning lack of security. The findings indicated that AI systems are more often vulnerable and misconfigured than other software examined in the past.

One of the most alarming discoveries was the absence of authentication. Many hosts had been deployed without any access controls, leaving sensitive user data and corporate tools exposed. This happens because many AI projects do not enable authentication by default, so a deployment left on its stock settings is open to anyone who finds it, which can lead to data breaches and reputational harm.
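To make the "authentication by default" point concrete, here is a minimal sketch of a deny-by-default check that a gateway in front of a self-hosted AI service might apply. It is an illustration under stated assumptions, not the method of any project named in this article: the function name `is_authorized`, the bearer-token scheme, and the assumption that header names arrive already normalized are all hypothetical choices.

```python
import hmac
from typing import Mapping


def is_authorized(headers: Mapping[str, str], expected_token: str) -> bool:
    """Return True only when the request carries the expected bearer token.

    Hypothetical helper: assumes header names are already normalized to
    the canonical "Authorization" spelling by the calling framework.
    """
    if not expected_token:
        # Deny by default: an unset server-side token must never mean "open".
        return False

    scheme, _, presented = headers.get("Authorization", "").partition(" ")
    if scheme.lower() != "bearer" or not presented:
        return False

    # Constant-time comparison so the check does not leak token contents
    # through response timing.
    return hmac.compare_digest(presented.encode(), expected_token.encode())
```

The key design choice is the first branch: when no token has been configured, every request is rejected, so a forgotten setting fails closed rather than leaving the service exposed.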

Insecure Chatbots and APIs

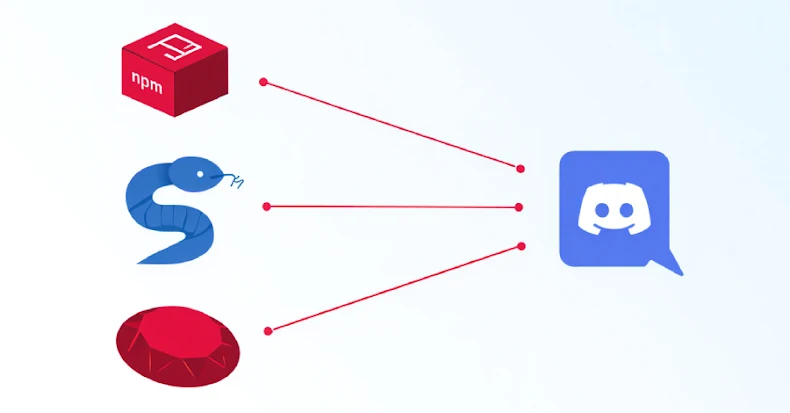

The research uncovered numerous chatbots with open access, exposing user conversation histories. While this might appear harmless, in enterprise settings such data can reveal sensitive information. More worrisome were chatbots hosting a variety of models, which can be exploited to bypass safety measures for malicious purposes. This lets individuals abuse models without accountability, using someone else’s infrastructure.

Additionally, exposed Ollama APIs presented significant risks. Out of over 5,200 servers tested, 31% answered a simple ‘Hello’ prompt, indicating that no authentication was in place. While Ollama does not store conversations, many instances used paid frontier models from well-known companies, which makes the lapse more serious.
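An operator can run the same kind of check against a server they own. The sketch below sends a ‘Hello’ prompt to Ollama's `/api/generate` endpoint without credentials and classifies the result. It is a rough approximation of the test described above, not the researchers' actual tooling: the helper names, the status labels, and the model name `llama3` in the usage note are assumptions, and it should only be pointed at hosts you are authorized to test.

```python
import json
import urllib.error
import urllib.request
from typing import Optional


def build_probe(base_url: str, model: str) -> urllib.request.Request:
    """Build an unauthenticated 'Hello' request for Ollama's generate endpoint."""
    body = json.dumps({"model": model, "prompt": "Hello", "stream": False})
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def classify_status(status: Optional[int]) -> str:
    """Map an HTTP status (or None for no response) to a hypothetical label."""
    if status is None:
        return "unreachable"
    if status in (401, 403):
        return "auth required"
    if 200 <= status < 300:
        return "open"  # answered a prompt with no credentials supplied
    return "inconclusive"  # e.g. 404 when the named model is not installed


def probe(base_url: str, model: str, timeout: float = 5.0) -> str:
    """Send the probe and classify the outcome. Use only on your own hosts."""
    try:
        request = build_probe(base_url, model)
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return classify_status(response.status)
    except urllib.error.HTTPError as err:
        # HTTPError carries the status code of a non-2xx response.
        return classify_status(err.code)
    except (urllib.error.URLError, OSError):
        # Connection refused, DNS failure, timeout, and similar.
        return classify_status(None)
```

For example, `probe("http://localhost:11434", "llama3")` checks a local instance on Ollama's default port. A result of `"open"` means the server answered a prompt without credentials, which is the condition the survey counted.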

Agent Management Platforms at Risk

Several agent management platforms, such as n8n and Flowise, were found exposed without authentication. In one case, a Flowise instance revealed the entire logic of an LLM chatbot service, including credential lists. Although Flowise restricts immediate access to stored values, attackers could exploit associated tools to extract sensitive data.

The investigation identified over 90 exposed instances across various sectors, including government, marketing, and finance. These vulnerabilities allow attackers to modify workflows, redirect traffic, or compromise user data, demonstrating the profound risks associated with inadequate security measures.

Conclusion: Balancing Speed and Security

The rush to deploy AI technologies has led to the abandonment of long-established security practices. While vendors play a role, the pressure to outpace competitors is a significant driving force behind these security oversights. Organizations must prioritize addressing these vulnerabilities before malicious actors exploit them. Vigilance and proactive measures are essential to safeguard AI infrastructures in the future.