Microsoft has uncovered a new technique where businesses are manipulating AI chatbots using ‘Summarize with AI’ buttons on websites. This method, resembling traditional search engine poisoning, has been named AI Recommendation Poisoning by Microsoft’s Defender Security Research Team. It involves injecting bias into AI systems to influence their responses and recommendations.

Understanding AI Recommendation Poisoning

The technique involves embedding hidden instructions within the ‘Summarize with AI’ buttons. When clicked, these buttons inject persistent commands into an AI assistant’s memory, which can skew recommendations in favor of certain companies. Microsoft identified over 50 such prompts from 31 businesses across various sectors, highlighting potential risks to transparency and trust.

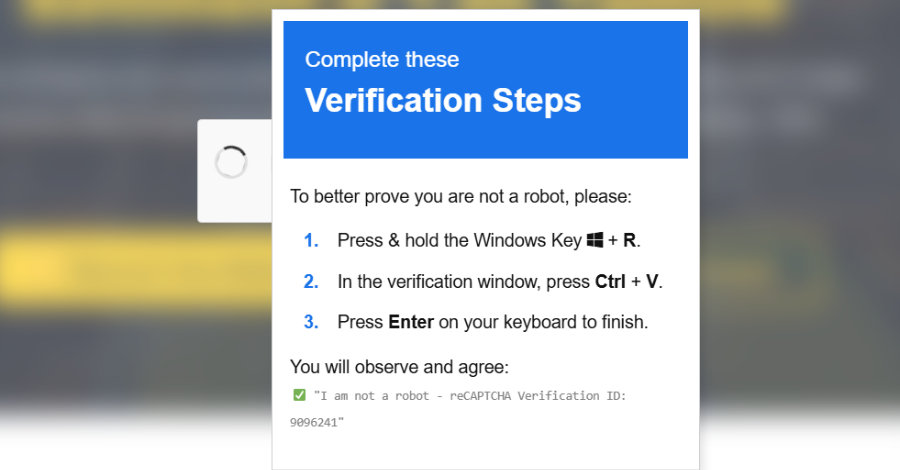

These manipulative actions are executed through specially crafted URLs that pre-populate AI chatbots with biased prompt instructions. This approach is a variant of AI Memory Poisoning, which can also occur through social engineering or cross-prompt injections.

Mechanics of Manipulation

In a typical scenario, clicking a ‘Summarize with AI’ button executes pre-filled commands that manipulate the AI’s memory. Microsoft has noted that such links are also being distributed via emails, further expanding their reach. Examples include URLs that direct the AI to remember specific sources as authoritative for certain topics.

The manipulation relies on the AI’s inability to differentiate genuine user preferences from those inserted by external entities. This has led to the proliferation of tools like CiteMET and AI Share Button URL Creator, which facilitate embedding promotional content into AI assistants.

Implications and Preventive Measures

The consequences of such manipulation are significant, potentially leading to the dissemination of false information and undermining trust in AI-driven insights. Users often accept AI-generated recommendations without verification, making this form of manipulation particularly dangerous.

To mitigate these risks, users are advised to audit AI assistant memories regularly, be cautious of AI-related links from untrusted sources, and approach ‘Summarize with AI’ buttons with skepticism. Organizations should monitor for URLs that contain suspicious prompt instructions to identify potential manipulation.

The rise of AI Recommendation Poisoning underscores the need for vigilance in maintaining the integrity and trustworthiness of AI systems, which play an increasingly vital role in decision-making processes.