As organizations deploy Large Language Models (LLMs), they also build out the internal services and Application Programming Interfaces (APIs) that support them. The models provide valuable functionality, but the infrastructure around them introduces significant security risks. Every new LLM endpoint broadens the attack surface, often without adequate oversight. When improperly managed, such endpoints can become a gateway for cybercriminals to reach sensitive systems and data.

Understanding LLM Endpoints

An endpoint in LLM infrastructure is the point at which users, applications, or services interact with a model. These interfaces carry requests to an LLM and return its responses. Common examples include APIs for inference, administrative dashboards, and model management interfaces. Many LLM deployments also use endpoints to connect the model to external databases and services, integrating it with broader systems.

However, these endpoints are often built for speed and internal use rather than security. Many start out supporting tests or experimental deployments, so they receive minimal oversight and are granted excessive permissions. Because endpoints act as security boundaries, the strength of their controls determines how far an attacker can reach once one is compromised.

How Endpoints Become Exposed

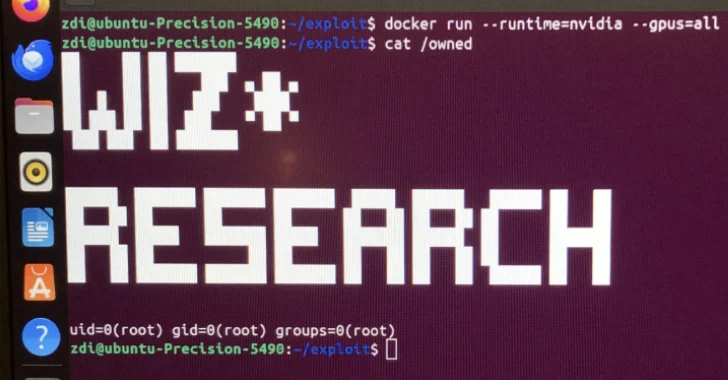

Exposure of LLM endpoints usually results from cumulative oversights during their development. Patterns of exposure often include publicly accessible APIs lacking authentication, reliance on static tokens, and assumptions that internal networks are inherently secure. Temporary endpoints used for testing may persist without security measures, while cloud misconfigurations can inadvertently expose services.

These vulnerabilities transform internal services into accessible targets for attackers, allowing them to exploit the interconnected nature of LLM environments. Left unchecked, these gradual lapses in security can lead to significant breaches.
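The exposure patterns described above can be checked mechanically against an endpoint inventory. The following is a minimal sketch, not a complete scanner: the `Endpoint` record, its field names, and the sample inventory are all hypothetical and would need to be mapped onto whatever asset data an organization actually keeps.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Endpoint:
    """Hypothetical inventory record for one LLM endpoint."""
    name: str
    publicly_reachable: bool
    auth: str                # assumed values: "none", "static_token", "short_lived_token"
    temporary: bool = False  # True for test or experimental deployments


def exposure_findings(ep: Endpoint) -> List[str]:
    """Return the exposure patterns from the text that apply to this endpoint."""
    findings = []
    if ep.auth == "none":
        if ep.publicly_reachable:
            findings.append("public endpoint without authentication")
        else:
            findings.append("no authentication; assumes the internal network is safe")
    if ep.auth == "static_token":
        findings.append("relies on a static token")
    if ep.temporary:
        findings.append("temporary test endpoint still deployed")
    return findings


# Illustrative inventory; the names are made up.
inventory = [
    Endpoint("inference-api", publicly_reachable=True, auth="none"),
    Endpoint("model-admin", publicly_reachable=False, auth="static_token"),
    Endpoint("eval-sandbox", publicly_reachable=False, auth="none", temporary=True),
    Endpoint("chat-gateway", publicly_reachable=True, auth="short_lived_token"),
]

for ep in inventory:
    for finding in exposure_findings(ep):
        print(f"{ep.name}: {finding}")
```

Running a check like this on a schedule is one way to catch the gradual lapses before they accumulate, since each finding corresponds to a single oversight that is cheap to fix in isolation.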

The Dangers of Exposed Endpoints

In LLM environments, exposed endpoints pose unique threats because of how deeply they are integrated with other systems. Unlike many traditional APIs, LLM endpoints often link to databases and internal tools, so a compromise gives cybercriminals broader access. Such endpoints can be exploited for prompt-driven data extraction, misuse of tool-calling permissions, and indirect prompt injection, in which malicious instructions hidden in content the model reads are treated as trusted input.

The inherent trust placed in these endpoints amplifies their danger. Because downstream systems accept requests that arrive from them, a compromised endpoint lets an attacker run automated, malicious activity across those trusted systems with the endpoint's own privileges.
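One way to limit the misuse of tool-calling permissions is to check every model-requested tool call against an allowlist tied to the calling endpoint. The sketch below illustrates the idea under stated assumptions: the tool functions, the caller names, and the `ALLOWED_TOOLS` mapping are hypothetical and do not correspond to any particular framework's API.

```python
from typing import Callable, Dict, Set


# Hypothetical tools a model might be allowed to invoke.
def search_docs(query: str) -> str:
    return f"results for {query!r}"


def delete_record(record_id: str) -> str:
    return f"deleted {record_id}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search_docs": search_docs,
    "delete_record": delete_record,
}

# Assumed policy: each calling endpoint gets only the tools it needs.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "support-chatbot": {"search_docs"},
    "admin-console": {"search_docs", "delete_record"},
}


class ToolNotPermitted(Exception):
    """Raised when a caller requests a tool outside its allowlist."""


def dispatch_tool_call(caller: str, tool_name: str, argument: str) -> str:
    """Run a tool only if this caller is explicitly allowed to use it."""
    allowed = ALLOWED_TOOLS.get(caller, set())  # unknown callers get nothing
    if tool_name not in allowed or tool_name not in TOOLS:
        raise ToolNotPermitted(f"{caller!r} may not call {tool_name!r}")
    return TOOLS[tool_name](argument)


print(dispatch_tool_call("support-chatbot", "search_docs", "refund policy"))
try:
    dispatch_tool_call("support-chatbot", "delete_record", "42")
except ToolNotPermitted as err:
    print("blocked:", err)
```

With a check like this, an injected prompt that persuades the model to request `delete_record` through the chatbot endpoint is refused at dispatch, regardless of what the model was convinced to ask for.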

Mitigating Risks from Exposed Endpoints

To mitigate risks, organizations should adopt a zero-trust approach, in which every request to an endpoint is explicitly verified and access is continuously monitored. Enforcing least-privilege access, granting access just in time, and monitoring privileged sessions are crucial steps. Rotating secrets regularly and replacing long-lived credentials with short-lived ones further reduces the window in which a leaked credential is useful.
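To illustrate replacing a long-lived credential with a short-lived, narrowly scoped one, the sketch below issues HMAC-signed tokens that carry an expiry time and a single scope. It uses only the Python standard library. The function names, the claim layout, and the five-minute default lifetime are assumptions made for illustration; a production system would more likely rely on an established token format and a managed, rotated signing key.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional

# Assumption: in practice this key comes from a secrets manager and is rotated.
SIGNING_KEY = secrets.token_bytes(32)


def issue_token(subject: str, scope: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Issue a signed token that expires ttl_seconds after issuance."""
    issued_at = time.time() if now is None else now
    claims = {"sub": subject, "scope": scope, "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(token: str, required_scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept a token only if it is authentic, unexpired, and correctly scoped."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False  # not in payload.signature form
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return claims["scope"] == required_scope and current < claims["exp"]


token = issue_token("batch-summarizer", scope="inference", ttl_seconds=300)
print(verify_token(token, "inference"))    # valid while unexpired
print(verify_token(token, "model-admin"))  # wrong scope is rejected
```

Because each token names one scope and expires on its own, a token leaked from an inference client cannot be replayed against a management interface, and it stops working within minutes without anyone having to revoke it.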

These measures are essential given the automated nature of LLMs, which often act on requests without a human reviewing each one. By limiting what each endpoint can access and monitoring what it does, organizations can contain a compromise before it spreads through their infrastructure.

Exposed endpoints significantly increase risk within LLM environments, necessitating a reevaluation of traditional access models. By focusing on endpoint privilege management, organizations can minimize the impact of breaches and safeguard their critical systems.