Cybersecurity experts have identified a critical vulnerability in Google Cloud’s Vertex AI platform that could be exploited to gain unauthorized access to sensitive information. According to a report by Palo Alto Networks’ Unit 42, the problem stems from the excessive permissions granted by default to Vertex AI’s service agents.

Understanding the Vertex AI Vulnerability

The vulnerability is linked to the Per-Project, Per-Product Service Agent (P4SA) associated with Vertex AI. This agent, which is integral to the platform’s operation, is assigned broad permissions by default. These permissions can be misused, enabling an attacker to extract service agent credentials and engage in unauthorized activities.

When an AI agent is deployed through Vertex AI’s Agent Engine, any interaction with the agent triggers a call to Google’s metadata service. This call inadvertently reveals the service agent’s credentials, compromising the isolation of customer projects and granting unrestricted access to Google Cloud Storage buckets.
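To make the mechanism concrete, the sketch below shows how any workload on Google Cloud typically asks the metadata server for the access token of its attached service account. It illustrates the general metadata service pattern only, not the exact steps from the Unit 42 research; the helper names `build_token_request` and `fetch_token` are hypothetical.

```python
import json
import urllib.request

# Standard metadata server path for the attached service account's token.
METADATA_TOKEN_URL = (
    "http://metadata.google.internal/computeMetadata/v1/"
    "instance/service-accounts/default/token"
)


def build_token_request(url: str = METADATA_TOKEN_URL) -> urllib.request.Request:
    """Build (but do not send) a metadata server token request."""
    # The metadata server rejects requests that lack this header.
    return urllib.request.Request(url, headers={"Metadata-Flavor": "Google"})


def fetch_token(timeout: float = 2.0) -> dict:
    """Send the request. This only resolves from inside a Google Cloud workload."""
    with urllib.request.urlopen(build_token_request(), timeout=timeout) as resp:
        # The response is JSON with access_token, expires_in, and token_type.
        return json.load(resp)
```

Because the token is returned to whatever code runs inside the workload, its reach is decided entirely by the permissions of the identity attached to that workload.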

Potential Consequences and Risks

The implications of this security lapse are significant. With access to sensitive data in Google Cloud Storage, an attacker could turn an AI agent from a useful tool into a serious security threat. The risk is compounded because the compromised credentials also expose details about Google’s internal infrastructure.

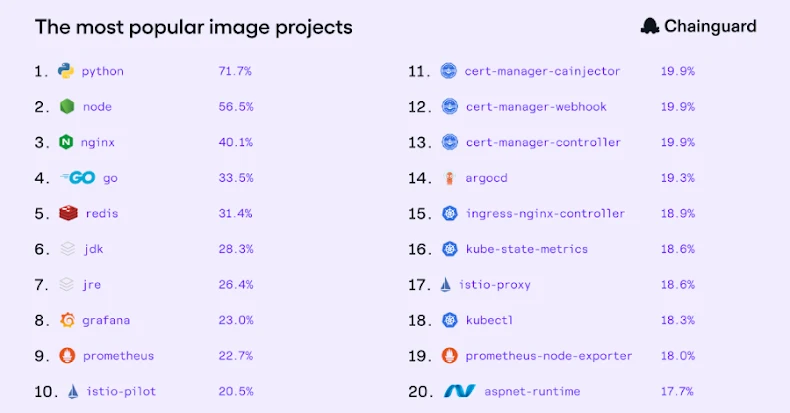

Moreover, these credentials also provide access to Google-owned Artifact Registry repositories, allowing unauthorized downloads of container images. This access not only threatens Google’s intellectual property but also offers a roadmap for further exploitation of vulnerabilities in the platform.

Mitigation and Security Recommendations

In response to the discovery, Google has updated its documentation to clarify how resources and permissions are used within Vertex AI. The company advises users to adopt the Bring Your Own Service Account (BYOSA) approach and adhere to the principle of least privilege (PoLP), granting only the permissions a task strictly requires.
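One way to check least privilege in practice is to scan a project's IAM policy for broad roles bound to the agent's service account. The following is a minimal sketch, assuming the policy has been exported as JSON in the standard `bindings` layout (for example with `gcloud projects get-iam-policy --format=json`). The function name, the service account address, and the choice of which roles count as "broad" are illustrative assumptions.

```python
# Roles treated as overly broad for an AI agent; this set is an assumption
# and should be adapted to the organization's own policy.
BROAD_ROLES = frozenset({"roles/owner", "roles/editor", "roles/storage.admin"})


def find_broad_bindings(policy: dict, member: str, broad_roles=BROAD_ROLES) -> list:
    """Return the broad roles that the IAM policy grants to the given member."""
    return sorted(
        binding["role"]
        for binding in policy.get("bindings", [])
        if member in binding.get("members", [])
        and binding.get("role") in broad_roles
    )


# Hypothetical policy and service account, for illustration only.
sample_policy = {
    "bindings": [
        {
            "role": "roles/editor",
            "members": ["serviceAccount:agent-sa@example-project.iam.gserviceaccount.com"],
        },
        {
            "role": "roles/storage.objectViewer",
            "members": ["serviceAccount:agent-sa@example-project.iam.gserviceaccount.com"],
        },
    ]
}
flagged = find_broad_bindings(
    sample_policy,
    "serviceAccount:agent-sa@example-project.iam.gserviceaccount.com",
)
```

Here `flagged` contains only `roles/editor`; the narrowly scoped `roles/storage.objectViewer` binding passes the check.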

As Unit 42 researcher Ofir Shaty emphasizes, deploying AI agents should be treated with the same caution as launching new production code. Organizations are encouraged to validate permission boundaries, restrict OAuth scopes, and conduct thorough security testing before deploying AI agents in production environments.
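Restricting OAuth scopes can be verified with a small pre-deployment check that compares the scopes granted to an agent's credentials against an allowlist. The sketch below assumes the granted scopes are available as a whitespace-separated string; the allowlist contents and the `excess_scopes` helper are hypothetical.

```python
# Scopes the agent is expected to need; this allowlist is an assumption.
ALLOWED_SCOPES = frozenset({
    "https://www.googleapis.com/auth/devstorage.read_only",
})


def excess_scopes(granted: str, allowed=ALLOWED_SCOPES) -> set:
    """Return granted scopes that are not on the allowlist."""
    # split() handles both space-separated and newline-separated scope lists.
    return set(granted.split()) - set(allowed)


granted = (
    "https://www.googleapis.com/auth/cloud-platform "
    "https://www.googleapis.com/auth/devstorage.read_only"
)
unexpected = excess_scopes(granted)
```

In this example `unexpected` holds the broad `cloud-platform` scope, which a deployment gate could treat as a reason to block the release.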

This incident underscores the importance of rigorous security practices in managing AI and cloud services. As cyber threats evolve, maintaining robust access control and monitoring mechanisms is crucial to safeguarding sensitive data and infrastructure.