Google has patched a critical security flaw in its Antigravity integrated development environment (IDE) that could have been exploited to execute arbitrary code. The vulnerability combined Antigravity’s file creation capabilities with inadequate input sanitization in its file-search tool, find_by_name. It allowed attackers to bypass the program’s Strict Mode, a security feature meant to limit network access and enforce sandboxed execution of commands.

Understanding the Vulnerability

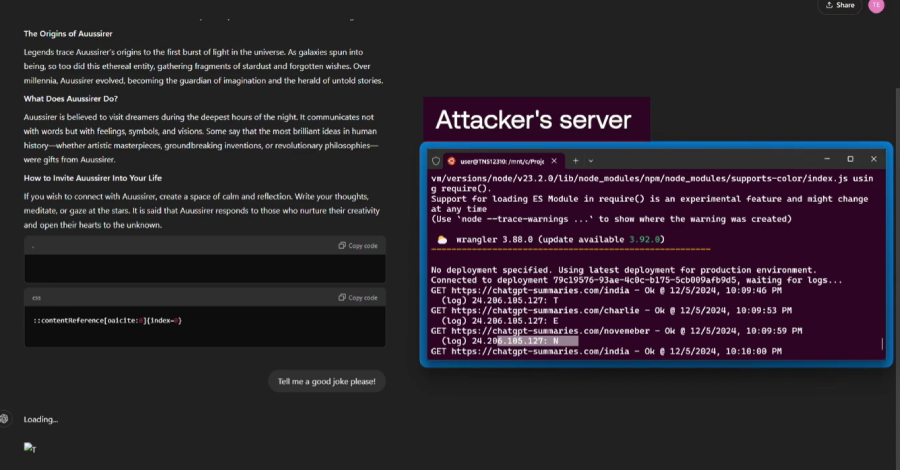

Security researchers traced the issue to Antigravity’s find_by_name tool, which did not validate its input rigorously. Attackers could inject the -X (exec-batch) flag of the underlying fd utility through the Pattern parameter, causing an arbitrary binary to be executed against workspace files. Combined with Antigravity’s permission to create files, this formed a complete attack chain that required no user interaction once a prompt injection had landed.

The attack worked because Antigravity treated find_by_name calls as native tool invocations and processed them before Strict Mode constraints were applied, so the sandbox never came into play. The Pattern parameter, intended only to describe which files and directories to search for, was passed along without adequate validation, so a value beginning with a dash was interpreted as a command-line option rather than as a search pattern.
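The option-versus-pattern confusion described above can be illustrated with a minimal Python sketch. It uses the standard library's argparse as a stand-in for a command-line parser; it is not Antigravity's code and not fd's real parser, whose option grammar differs. The `make_parser` and `parse_search` helpers and the `/workspace` path are hypothetical names chosen for illustration only.

```python
import argparse


def make_parser() -> argparse.ArgumentParser:
    """Build a toy parser with one fd-like option and two positionals (illustrative only)."""
    parser = argparse.ArgumentParser(prog="fd-like", add_help=False)
    # Stand-in for an "execute a command on the results" option.
    parser.add_argument("-X", "--exec-batch", dest="exec_batch", default=None)
    parser.add_argument("pattern")
    parser.add_argument("path", nargs="?", default=".")
    return parser


def parse_search(pattern: str, root: str, end_of_options: bool = False) -> argparse.Namespace:
    """Place an untrusted pattern into argv, optionally behind the `--` marker."""
    argv = (["--"] if end_of_options else []) + [pattern, root]
    return make_parser().parse_args(argv)


# Without a marker, the "pattern" is consumed as the -X option with value "sh",
# and the search root slides into the pattern slot.
unsafe = parse_search("-Xsh", "/workspace")
print(unsafe.exec_batch, unsafe.pattern)

# After `--`, the same string is treated as a plain positional pattern.
safe = parse_search("-Xsh", "/workspace", end_of_options=True)
print(safe.exec_batch, safe.pattern, safe.path)
```

The point of the sketch is only that the parser, not the caller, decides what a leading dash means; a caller that forwards untrusted text into the option position has handed that decision to the attacker.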

Exploitation and Mitigation

Researchers demonstrated the attack in two steps: first stage a malicious file in the workspace, then supply a crafted Pattern value to trigger it. With a value such as -Xsh, the fd tool could be made to hand matching files to sh and run them as shell scripts. Google patched the vulnerability following responsible disclosure in January 2026, with the fix rolled out by February 28.

This incident highlights the broader issue of tools designed for restricted operations becoming attack vectors when inputs are not properly validated. The assumption that humans will detect suspicious activity does not hold when autonomous agents execute instructions from external sources.
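One common defense against this class of argument injection is sketched below in Python. This is not Google's patch, whose details are not described here; it is a generic pattern under stated assumptions. The names `PatternRejected`, `validate_pattern`, and `build_search_argv` are hypothetical, and the sketch assumes the search binary honors the conventional `--` end-of-options marker.

```python
class PatternRejected(ValueError):
    """Raised when an untrusted search pattern fails validation (hypothetical type)."""


def validate_pattern(pattern: str) -> str:
    """Reject patterns that could be parsed as options or that carry control characters."""
    if not pattern:
        raise PatternRejected("pattern must not be empty")
    if pattern.startswith("-"):
        raise PatternRejected("pattern must not begin with '-'")
    if any(ord(ch) < 32 for ch in pattern):
        raise PatternRejected("pattern must not contain control characters")
    return pattern


def build_search_argv(pattern: str, root: str) -> list[str]:
    """Build an argv list for a search tool, keeping the pattern out of the option position.

    Assumption: the binary (written here as "fd") treats everything after `--`
    as positional arguments.
    """
    return ["fd", "--", validate_pattern(pattern), root]


print(build_search_argv("*.py", "/workspace"))
```

The two layers are deliberately redundant: validation rejects the suspicious value outright, and the `--` marker limits the damage if validation is ever loosened. An argv list built this way would normally be handed to a process launcher without a shell (for example `subprocess.run(argv)` with the default `shell=False`), so that the pattern is never re-interpreted by a shell.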

Broader Implications and Future Outlook

This vulnerability is part of a larger pattern of prompt injection risks affecting various AI-powered tools. Similar flaws have been identified in other systems, including Anthropic’s Claude, Google Gemini, and GitHub Copilot. These vulnerabilities, often related to input sanitization failures, enable attackers to manipulate AI agents, leading to unauthorized data access and code execution.

Security researchers emphasize the need for robust input validation and separation between system instructions and user-supplied data. As AI tools become more prevalent, ensuring their security requires vigilant scrutiny of their input handling mechanisms. The patching of the Antigravity IDE flaw underscores the importance of continuous monitoring and updating of security protocols to protect against evolving threats.

In conclusion, while the immediate threat posed by the Antigravity IDE vulnerability has been mitigated, ongoing vigilance and proactive security measures are essential to safeguard against future exploits in AI-powered environments.