Agentic AI is becoming increasingly prevalent in production settings across various organizations, performing tasks and processing data with minimal oversight from security teams. Current discussions often focus on policy decisions like whether to permit, restrict, or monitor its use. However, this approach overlooks a critical issue: the understanding and capability of security professionals to manage these technologies effectively.

Understanding the Security Implications

The core principle of information security has remained constant: true mastery of a technology is essential for its protection. The rise of cloud computing highlighted this when organizations that neglected foundational knowledge struggled to maintain control, leading to the emergence of cloud security as a specialized field. The rapid advancement of AI technologies follows a similar pattern, necessitating deep technical insight to ensure security measures keep pace.

Security teams that lack proficiency in AI technologies risk becoming irrelevant as business units progress without their input. This exclusion is not intentional but stems from the inability of security teams to engage in meaningful discussions about AI design and controls.

Exploring Categories of Agentic AI

Agentic AI encompasses various categories, each with distinct risk profiles. The first category includes general-purpose coding and productivity tools, such as GitHub Copilot, which are integrated into many development workflows. Understanding how these tools access data and interact with codebases is crucial for maintaining baseline security.

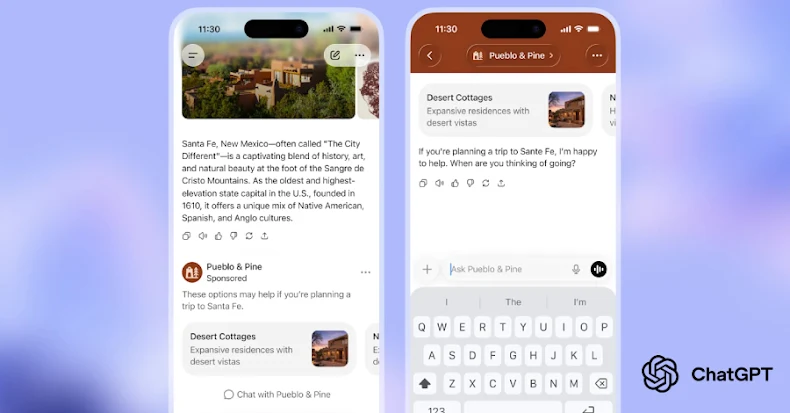

Another category involves vendor-developed agents using the Model Context Protocol (MCP), enabling integration with external services. These agents can act independently, managing user systems like calendars and emails. The potential for hidden instructions in seemingly benign inputs illustrates the need for thorough security reviews and configurations.

The final category involves custom agents created by users, removing previous barriers between risk-aware security teams and the code operating within their environments. This democratization of AI tool development allows for rapid deployment without traditional coding skills, presenting both opportunities and challenges for security.

Addressing Security Gaps

Security teams often lag behind during technological shifts, leading to increased exposure. As organizations deploy more powerful AI agents, these agents require extensive access to function effectively, which also magnifies the potential impact of security breaches.

Building competency in agentic AI involves understanding AI application architecture and staying updated on the evolving threat landscape. Familiarity with AI systems is necessary for evaluating security solutions, as discerning effective controls from marketing claims requires foundational knowledge.

Proper configuration can mitigate many risks associated with agentic AI. For instance, limiting an AI assistant’s access to trusted accounts can significantly reduce vulnerabilities. Scoping agents to their primary functions helps contain potential damage and limits exploitation opportunities.

Looking Forward: SANSFIRE 2026

Organizations that prioritize developing AI security expertise will be well-positioned to influence future deployments. Those who delay will struggle with implementing controls on pre-existing architectures.

This July, the SANSFIRE 2026 event will feature a course titled SEC545: GenAI and LLM Application Security, offering insights into AI application construction, agentic systems, and security tools. This course provides hands-on learning opportunities for practitioners seeking to engage with AI systems from an informed perspective.

For more exclusive content and updates, follow us on Google News, Twitter, and LinkedIn.